Leonor Ciarlone, Mary Laplante, Senior Analysts, The Gilbane Group, November 2007

Sponsored by Sajan

You can also download a PDF version of this whitepaper (15 pages).

Table of Contents

A Quality-Driven Global Content Lifecycle: Elusive but Achievable

Improving the Quality Quotient: Connecting People with Content

Empowering Translation Project Managers

Maintaining the Quality Quotient: Connecting People with People

Measuring the Quality Quotient: Connecting Globalization with Customer Satisfaction

The Quality Quotient in Practice

Unique Dimensions of Quality, Universal Application of Technology

Creating a Blueprint for a Quality Strategy

Conclusions: The Gilbane Group Perspective

Executive Summary

The “Information Age” has ended. Since the 1980s, organizations have been working diligently toward the premise that multi-channel availability of information will be the foundation of business operations. This prediction has indeed come true, continuously demonstrated by intrinsic links between employee productivity, customer satisfaction, and accessible information. It has also been widely emphasized by executives across every industry with declarations such as, “For the first time in 300 years, the very nature of banking has changed. We still handle money, but information, not money, is now the lifeblood of our industry.”[1.]

We are well into the Global Information Age, in which mere information availability no longer suffices. This is a period of distinct customer expectations that demand relevant information that is culturally acceptable, appealing, and most important, understood. Context has undergone a significant metamorphosis, redefining the meaning of “ease of use” as applied to communications, software applications, and consumer products. Delivering contextual, multilingual information – communications that make sense in the customer’s language of choice – is fundamental. Translation is a corporate requirement.

In and of itself, however, the act of translation provides no “certificate of excellence,” no guarantee of quality. It is inarguably both an art and a science, driven by the knowledge of people, supported by the efficiency of processes, and facilitated by the power of technology. It is difficult to measure when it is done effectively, but devastatingly obvious when it is not. Quality translation, then, is also a corporate requirement.

Fusing quality and translation is a significant part of the formula for success in a global economy. Organizations with existing or expanding multinational revenue profiles understand the need for quality translation. During interviews with The Gilbane Group, no organization claimed to be an expert in achieving this goal or to have a foolproof method for doing so. Yet all organizations agreed that the “idea” of quality is the basis for continuous improvements within the global content lifecycle. The obvious next question: what is quality, and how will organizations know when they achieve it? We offer the following:

Quality depends on the ability of knowledge workers, in this case writers and translators, to produce products that satisfy the customer.

The quality quotient is the level of satisfaction a customer achieves when using those products.

Improving, maintaining, and measuring the quality quotient of products for the Global Information Age is a daunting, if not nearly impossible, goal if translation is not an intrinsic part of the global content lifecycle. Just as organizations found that multi-channel output could not be treated as an afterthought in the Information Age, it is clear that translation cannot be treated as such now.

Quality and translation can be synonymous. Achieving quality can be elusive, but it is not magic. Interlocking people, process, and technology “ingredients” forms the basis of a quality quotient that defines itself according to global customer expectations and then increases based on global customer experiences.

A Quality-Driven Global Content Lifecycle: Elusive but Achievable

At the end of the day, quality depends on the ability of knowledge workers to produce products that satisfy customers. When customers respond positively to products, companies benefit from increased levels of satisfaction and loyalty. When customers respond negatively, companies experience increased support requests, declining customer bases, and ultimately, the erosion of brand equity.

In its simplest form, the fundamental definition of quality is customer satisfaction. Whether one subscribes to the philosophies and methodologies of Crosby, Deming, Drucker, or Juran, each “quality guru” shares a common perspective: produce results or products that meet or exceed customer expectations.

Corporate communications, whether in the form of marketing collateral, user documentation, or training materials, are information-centric products. As such, they have a direct influence on customer satisfaction and, in turn, a mandate for quality. These customer-facing materials are also under the same globalization pressures as the products they support. In fact, we are well into the Global Information Age, in which mere information and product availability no longer suffice.

The American Translators Association estimates that the worldwide market for translation and interpretation services is approximately $13 billion, growing at an annual rate of about 15% through 2010[2.]. Speak in your customers’ language. Provide a contextual customer experience. The global economy demands it, and the content lifecycle must accommodate it. It is a daunting, if not nearly impossible, goal, however, if translation is not an intrinsic part of the lifecycle. When translation is an afterthought, improving its quality quotient (the level of satisfaction a customer achieves when using information-centric products) is unachievable.

Like any other critical business process, translation is highly dependent on the methods and technologies that enable knowledge workers to get the job done with accuracy and efficiency. Quality does not happen by default. Rather, it is a result of a people, process and technology approach that supports the flow of content throughout the global content lifecycle. Hence, looking for ways to maximize the quality quotient during the lifecycle is critical.

Understanding the optimum stages at which workers have an opportunity to introduce quality improvements is a fundamental place to start. Designing and implementing repeatable and measurable processes is next. Then, technology can do its job by:

- Empowering writers, translators, and subject matter experts with interactive, collaborative tools.

- Connecting processes with the data sources required to complete tasks.

- Replacing redundancy and inefficiency with automation and flexibility.

- Providing scalability as source and translated content volume increases.

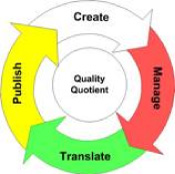

In general, the recipe for an ever-increasing quality quotient looks like this:

| People | Process | Technology |
| --- | --- | --- |
| Collaboration | Agile | Architectural integrity |
| Cultural usability | Consistent | Interoperability |
| Leadership | Defendable | Reliability |
| Productivity | Measurable | Scalability |
| Recognition and reinforcement | Predictable | Systematic automation |
| | Repeatable | Transparency |

Combined, these ingredients yield competitive advantage, innovation, and value.

The global economy continues to provide organizations with tangible opportunities for revenue growth and brand expansion. The pressure to decrease time to market is a natural result. However, customer expectations include the desire for multilingual communications and interactions that make sense.

Now is the time to increase the quality quotient across the global content lifecycle in ways that drive efficiencies and reduce costs. Methodologies such as Six Sigma, Total Quality Management (TQM) and the LISA QA Model from the Localization Industry Standards Association are of great value to organizations with process re-engineering in mind. This whitepaper includes a Resources Appendix for more information on quality methodologies and technology standards.

The following sections focus on the value of technology in the global content lifecycle as it relates to improving the quality quotient of information-centric products. Certainly, every organization has unique processes that present unique opportunities for improvement based on the needs of workgroups and individuals. However, the significance of technologies such as enriched authoring, terminology management, translation memory, contextual search and retrieval, and API-level integration is universal. Each helps in:

- Improving the Quality Quotient

- Maintaining the Quality Quotient

- Measuring the Quality Quotient

Improving the Quality Quotient: Connecting People with Content

Information-centric products deliver customer satisfaction only when communication is:

- Accessible

- Accurate

- Clear

- Complete

- Consistent

- Timely

As such, a critical step in improving the quality quotient of these products is empowering the knowledge workers involved in the creation of source and translated content with technologies that help them get the job done. Source content authors, translators, and translation project managers all need to connect with the right content – as well as with each other – to affect the quality quotient.

Learning to “bake in” quality during content creation is an iterative process; it focuses directly on the beginning of the global content lifecycle. Here’s where the phrase “quality in, quality out” makes perfect sense. Quality at the source is the objective. Reduced costs, faster development and approval cycles, and decreased time to market are the outcomes.

Empowering Source Authors

Technical and marketing writers are often the forgotten heroes of the global content lifecycle. Challenged in the Information Age by the need to single-source information for multi-channel delivery, they must now re-define “multi-channel” by accounting for translated versions of print, electronic, and online help deliverables.

According to Gilbane Group research, there is a significant decline in the number of companies that translate information-centric products into only one to three languages. In fact, companies report an average increase in translation outputs from three to ten languages by 2008. That’s more than tripling an organization’s “translation capacity” in a short amount of time. Furthermore, many companies are already producing materials in well over 21 languages.

Source content authors require assistance. And they need it yesterday. From a technology perspective, a content or document management solution is a must. In this mature industry, most solutions have proven capabilities to support source control and versioning, single-sourcing to multiple outputs, and author-driven workflow.

But, it’s not enough. In fact, these capabilities alone cannot guarantee quality across the global content lifecycle without the means for source authors to access:

- Reusable content directly from common authoring and page layout environments

- Corporate guidelines for approved source and translated terminology

- Translation management systems that contain synchronized multilingual versions of the source content

The common theme here is enterprise-wide integration. Certainly, it can be a hard problem to solve, especially in globally-dispersed organizations. However, a growing recognition of the challenges in a global content lifecycle impels the software industry to meet the demand for more holistic, integrated approaches to the creation of source and translated content.

For source content authors, the quest to manage the chaos is starting to unearth solid technology-driven assistance. It is encouraging to see interactive, non-invasive and integrated technologies that help source authors identify and apply reusable content from both structured and unstructured authoring environments. It is empowering when the approach puts the control in the hands of the author, by suggesting appropriate actions rather than demanding them.

In addition, source content authors can be confident that the efforts put into corporate style guides are not wasted. Many an hour is spent on the creation of standard glossaries as well as rules for using trademarks, product names, and phrases that support brand messaging. Unfortunately, “double-time” is spent on editing and re-working materials to fix disregarded standards.

Terminology management (TM) technologies, available for a number of years but usually inaccessible to source content authors, are taking their rightful place on the desktop. Solutions that recognize their power to increase the quality quotient are using the Internet as a conduit to provide centralized and secure Web access to TM databases.
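To make the idea of desktop terminology assistance concrete, here is a minimal sketch of a checker that suggests approved terms rather than enforcing them, in keeping with the author-controlled approach described above. It is an illustration only: the glossary entries, the `Suggestion` type, and the `suggest_terms` function are hypothetical names invented for this example and do not represent any particular vendor's product.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    found: str      # the non-approved term as it appears in the draft
    approved: str   # the approved replacement from the glossary
    position: int   # character offset of the match in the draft


def suggest_terms(draft: str, glossary: dict[str, str]) -> list[Suggestion]:
    """Return suggestions for non-approved terms; the draft is never modified."""
    suggestions = []
    for variant, approved in glossary.items():
        # Match the variant as a whole phrase, ignoring case.
        pattern = re.compile(r"\b" + re.escape(variant) + r"\b", re.IGNORECASE)
        for match in pattern.finditer(draft):
            suggestions.append(Suggestion(match.group(0), approved, match.start()))
    return sorted(suggestions, key=lambda s: s.position)


# Hypothetical glossary: non-approved variant -> approved corporate term.
GLOSSARY = {
    "log-in screen": "sign-in page",
    "e-mail": "email",
}

draft = "Open the Log-in screen and enter your e-mail address."
for s in suggest_terms(draft, GLOSSARY):
    print(f"offset {s.position}: consider '{s.approved}' instead of '{s.found}'")
```

The function only reports findings; whether to accept a suggestion stays with the author. In practice the glossary would come from a centralized terminology database rather than a hard-coded dictionary.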

Last, but absolutely not least, is the trend toward the integration of content and document management with full-fledged globalization solutions and translation memory technologies. This is a powerful concept, let alone a much-needed union of siloed solutions that encourage redundancy and drive up development costs. Intelligent integration is key in this area, which we define as a focus on fusing workflows, search and retrieval, as well as reporting. The advantages for source content authors are compelling, especially when empowered with the ability to submit and track translation projects directly from content or document management environments.

Empowering Translators

Like their counterparts in technical and marketing workgroups, translators are often forgotten champions. In many industries, priority of resources and budget for information-centric products is akin to “the caboose on a high-speed train.” Gilbane Group interviewees describe making improvements within their workgroups as “changing the tire while driving 100 mph!”

Unfortunately, the translation process often follows the same path, but farther downstream. Analogous to the support railing at the end of the caboose, “a day in the life of a translator” can be arduous to say the least. Trained to think internationally from the start, translators are a critical resource for achieving quality at the source. Examples of inaccurate and downright insulting translations are extensive. Although they make for a good-natured “joke of the day,” offending customers is no laughing matter.

Translators require assistance. And they need it yesterday. If this sounds similar to the prose used in the previous section, it is intentional. From a technology perspective, tools such as translation memory and terminology management have been available since the mid-1990s. Despite this, they are often used in isolation from source content authoring tools as well as content or document management technologies.

Back to the subject of intelligent integration again. Although most organizations understand that siloed processes and technologies are risky, synchronizing source authors and translators has not been a high priority. They always seem to get the job done somehow. While it is true that the software industry has also been slow to accommodate this need, the trend toward technology integration is happening, as discussed above.

In addition to integration, there is potential to help translators ensure quality and improve productivity with more technology-driven assistance, including:

- Flexibility to access translation memory while using preferred translation tools.

- Wider support for standards such as TMX, an XML standard for the exchange of translation memory, to enable consistently interoperable technology solutions.

- Organization-defined metadata on country-language pairs that helps translators make decisions about the contextual applicability of phrases or sentences.

- Accessible, tightly-coupled search and retrieval technologies that do more than identify “similarities” between new and previous translations.
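As a rough illustration of why a standard exchange format matters, the sketch below reads a small TMX document with Python's standard library and extracts the translation units available for one country-language pair. The sample content and the `load_pairs` helper are hypothetical and written only for this example; real TMX files are exported by translation memory tools and typically carry far more metadata.

```python
import xml.etree.ElementTree as ET

# ElementTree exposes the xml:lang attribute under the XML namespace URI.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

# Hypothetical sample; attribute values in the header are placeholders.
SAMPLE_TMX = """<tmx version="1.4">
  <header creationtool="example-tool" creationtoolversion="1.0"
          segtype="sentence" o-tmf="example" adminlang="en-US"
          srclang="en-US" datatype="plaintext"/>
  <body>
    <tu>
      <tuv xml:lang="en-US"><seg>Press the power button.</seg></tuv>
      <tuv xml:lang="fr-FR"><seg>Appuyez sur le bouton d'alimentation.</seg></tuv>
    </tu>
    <tu>
      <tuv xml:lang="en-US"><seg>Close the cover.</seg></tuv>
      <tuv xml:lang="de-DE"><seg>Schliessen Sie die Abdeckung.</seg></tuv>
    </tu>
  </body>
</tmx>"""


def load_pairs(tmx_text, source_lang, target_lang):
    """Return (source, target) segment pairs for one language pair."""
    root = ET.fromstring(tmx_text)
    pairs = []
    for tu in root.iter("tu"):
        # Collect the segment text for each language variant in this unit.
        segments = {}
        for tuv in tu.findall("tuv"):
            seg = tuv.find("seg")
            if seg is not None:
                segments[tuv.get(XML_LANG)] = "".join(seg.itertext())
        # Keep the unit only if both sides of the requested pair exist.
        if source_lang in segments and target_lang in segments:
            pairs.append((segments[source_lang], segments[target_lang]))
    return pairs


for source, target in load_pairs(SAMPLE_TMX, "en-US", "fr-FR"):
    print(f"{source} -> {target}")
```

Because the language codes include the country (`fr-FR` rather than `fr`), the same lookup can distinguish locale-specific translations, which is the kind of country-language metadata described in the list above.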

In the Global Information Age, communication in the customer’s language of choice is fundamental. Competitive advantage, however, comes from communications that make sense according to culture and dialect as well as industry- and corporate-specific requirements. The ability to achieve “sense” rests within the environments and technologies that help translators do their job.

Empowering Translation Project Managers

The majority of organizations outsource translation projects to Language Service Providers (LSPs) to benefit from the dedicated expertise within this community. This approach, however, does not eliminate the need for translation project managers within organizations that buy language services.

Staying “out of the loop” of any outsourced project is never a good idea. As the LSP market continues to evolve and consolidate due to significant merger and acquisition activity, throwing projects over the wall and assuming that quality comes back is risky to say the least.

In some organizations, translation project management is handled by an individual or a group of distinct, centralized resources. In others, the task simply falls to already-overloaded technical writers or product managers. Even those with the utmost confidence in their LSP relationships (and many organizations have several) need to deflect the risks of poorly coordinated translation projects. For highly regulated industries such as pharmaceuticals, medical device manufacturing, and biotechnology, the risk can quickly transform into significant costs due to fines or prosecution.

On-time, quality translation is the objective, despite the challenges of tracking costs, project workflows, and results. Ensuring collaboration across siloed processes is an issue large enough to merit its own section, discussed in Maintaining the Quality Quotient: Connecting People with People. Before tackling collaboration issues, an internal translation project manager needs access to an environment with real-time, usable data on the translation project lifecycle.

The good news is that the use of project management and workflow tools is well established in the LSP community. Historically, the problem has been an organization’s visibility into the data captured in these environments. Back to the subject of intelligent integration yet again. In this case, it is not necessary to reinvent the wheel if your LSP provides project management data. To ensure quality across the global content lifecycle, it is necessary to access and utilize that data for continuous improvement and regulatory compliance.

Technology such as the following can empower internal project managers with the tools they need to ensure quality and accuracy while simultaneously controlling costs:

- Project metadata such as estimated and actual costs as well as language-specific status information.

- Content metadata at the phrase and/or sentence level such as version, time and date stamps, and author attribution.

- Linguist metadata that helps the project manager understand the level and value of translator expertise, including certifications and industry or locale expertise.

- Web-accessible reporting capabilities based on distinct audit trails.
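The project metadata in the list above can be pictured as a simple record per language, from which the gap between estimated and actual costs falls out directly. The sketch below is hypothetical: the `LanguageTask` fields, the sample figures, and the 10% tolerance are assumptions made for illustration, not data from any LSP system.

```python
from dataclasses import dataclass


@dataclass
class LanguageTask:
    language: str          # country-language code, e.g. "de-DE"
    status: str            # e.g. "in translation", "in review", "delivered"
    estimated_cost: float
    actual_cost: float


def cost_variance(tasks):
    """Return actual minus estimated cost for each language."""
    return {t.language: round(t.actual_cost - t.estimated_cost, 2) for t in tasks}


def over_budget(tasks, tolerance=0.10):
    """List languages whose actual cost exceeds the estimate by more than `tolerance`."""
    return [
        t.language
        for t in tasks
        if t.actual_cost > t.estimated_cost * (1 + tolerance)
    ]


# Hypothetical project data for illustration only.
project = [
    LanguageTask("de-DE", "delivered", 1000.0, 1050.0),
    LanguageTask("ja-JP", "in review", 1500.0, 1800.0),
    LanguageTask("fr-FR", "delivered", 1200.0, 1150.0),
]

print(cost_variance(project))
print(over_budget(project))
```

With records like these exposed by the LSP, a project manager can see per-language status and flag overruns while a project is still in flight instead of discovering them on the invoice.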

These technology-driven approaches can eliminate the need for project managers to act as human resource representatives while trying to meet tight deadlines. These resources do not usually have the bandwidth to find and sample the work of in-country linguists and subject matter experts. Constantly pressured to reduce costs, translation project managers desperately need visibility into outsourced processes. In essence, the abyss between estimated and actual costs should simply not exist; the ability to manage multiple translation projects involving multiple languages should not be impossible.

Maintaining the Quality Quotient: Connecting People with People

The need for workgroup collaboration is universal. The need to collaborate within the global content lifecycle is essential. Certainly, the work may “get done” with siloed, ad-hoc approaches to translation and localization efforts. However, it is usually redundant, expensive, and risky.

As organizations expand multinational revenue profiles, such approaches simply cannot scale to accommodate increasing content volumes, faster product development and release cycles, and larger groups of globally dispersed writers, translators, and translation project managers. Translators who do not ask questions are dangerous. Technical and marketing writers who anonymously transfer files for translation, but never talk to their project management and translator counterparts are equally so. Both situations are hazardous to brand management health.

The mission is clear: bridge the divide between the people, processes, and technologies that prevent collaboration during the global content lifecycle. The ability to increase the quality quotient relies on it; customer satisfaction depends on it. From a technology perspective, the goals for “connecting people with content” and “connecting people with people” share two central themes: visibility and context. It is not enough to provide a generic collaboration infrastructure that remains dependent on email and phone interactions or “lights out” workflow that lacks the ability to make in-flight adjustments.

Collaboration technologies that have any chance of increasing the quality quotient during the global content lifecycle are proactively tuned to anticipate and facilitate the right interactions at the right time. “Teamwork” should not be an oxymoron. In a traditional content lifecycle, technology supports a basic publishing model: create, approve, distribute. In a global content lifecycle, technology supports collaborative, iterative creation and translation cycles that depend on reusable content. For example:

- Translators need to share information about their qualifications, expertise, and availability with translation project managers.

- Translation project managers, whether internal or LSP-based, need to share project schedules with writers and product managers to define the cost and timing of language outputs.

- Writers and translators need to share project information as well as cultural insight during content creation, transfer, and management.

In essence, those involved in ensuring quality at the source need closer relationships. The trick is enabling an automated, yet facilitative environment that addresses role-based requirements rather than generic ones. Translation is a complex process in and of itself; it always includes internal and external workgroups and usually includes services-based resources. There are always multiple projects and numerous information flows in progress at any given time.

Information overload is not the goal. Rather, the aim is to leverage a centralized, intelligently organized, and accessible source and translated content infrastructure for on-demand interactions and context-driven workflow. As the power of the team to communicate and share increases, so too does the quality quotient. Technology-driven assistance can help with the need to achieve visible, contextual collaboration by providing:

| Knowledge Worker | Technology Impact |
| --- | --- |
| Source authors | |
| Translators | |
| Content approvers | |
| Translation project managers | |

As this table shows, all the resources that drive the global content lifecycle can benefit from a common set of technology capabilities for visible, contextual collaboration. On-demand access to the terminology management database, contextual search and retrieval, the ability to work offline, and project-based electronic sign-off forms fall into this category. Project analytics and reporting, the key to measuring and raising quality quotient levels, is a universal requirement as well, and large enough to merit its own section. Measuring the Quality Quotient: Connecting Globalization with Customer Satisfaction discusses the role of these technologies.

On the other hand, each resource has different requirements for the level of project information they need to access and use. For example, individual source authors often require direct, one-to-one contact with their translator counterparts for task coordination or parallel authoring. Conversely, translation project managers need access to comprehensive project status with the ability to drill down to task assignments or make in-flight process adjustments as necessary. In a visible, contextual collaboration environment, getting the right information to and from the right people maintains the quality quotient by keeping people coordinated, focused, and connected.

Measuring the Quality Quotient: Connecting Globalization with Customer Satisfaction

Quality can be hard to define and measure for those who create, manage, translate, and publish content. Ironically, though, customers can easily and immediately recognize inadequate content when they read it.

Quality depends on the ability of knowledge workers, in this case writers and translators, to produce products that satisfy the customer.

The quality quotient is the level of satisfaction a customer achieves when using those products.

This paper has discussed improving and maintaining the quality quotient of global content throughout its lifecycle by focusing on people, processes, and technology. It is important to understand, however, that the efforts described are not effective without making tangible commitments to measuring the quality quotient of translated products and then optimizing it over time.

Customer satisfaction is obviously the end goal. However, the concepts of “satisfaction” and quality are both an art and a science. They are inherently subjective, based on a myriad of factors including expectations, reactions, perceptions, and, most significant to the quality quotient of global content, culture. Measuring these factors can be elusive, but it is not impossible.

One of the most useful ways of approaching global content lifecycle measurement is with a quality quotient scale. Developed according to company-specific and universal factors, a quality quotient scale uses “Customer Satisfaction Units” (CSU) to measure the effectiveness and relevance of global content.

There are many ways to define CSUs; there is no “golden copy” of a single formula. Some factors are abstract and some concrete; some are scientific and some are emotional; some are simple and some are complex. Collectively however, there is a fundamental set of CSUs for a quality quotient scale. Encompassing three major categories, each requires a shared enterprise understanding of the items to be measured as well as how they are measured:

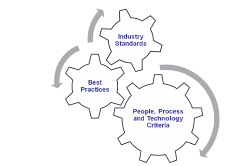

“Turning up the volume” in any one or all of these categories increases the quality quotient, or customer satisfaction, associated with products your organization delivers. First and foremost, it requires interlocking people, process, and technology “ingredients” based on your customer’s requirements and expectations and then increasing their impact based on customer behaviors and experiences. Collaborative groups of writers, translators, product managers, and brand managers can all help in developing these criteria. The group need not accomplish this in isolation; rather, they should have access to and utilize best practices from other organizations as well as industry standards for content and translation management.
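Since there is no single formula for CSUs, the following is only one possible way to picture a quality quotient scale: a weighted average of per-category scores. Everything in it is an assumption made for illustration. The category names are placeholders (not the categories from the figure), and the 0 to 100 scale, the weights, and the `quality_quotient` function are hypothetical choices an organization would replace with its own agreed definitions.

```python
def quality_quotient(scores, weights):
    """Weighted average of per-category scores (assumed 0-100 scale)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same categories")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in scores) / total_weight


# Placeholder categories and numbers; not taken from the whitepaper's figure.
weights = {"category_a": 2, "category_b": 1, "category_c": 1}
baseline = {"category_a": 80, "category_b": 60, "category_c": 70}

# "Turning up the volume" in one category raises the overall score.
improved = dict(baseline, category_a=90)

print(quality_quotient(baseline, weights))
print(quality_quotient(improved, weights))
```

The value of such a scale lies less in the arithmetic than in the shared, enterprise-wide agreement on what is measured and how much each category counts.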

The “people, process, and technology” mantra has been a significant theme throughout this white paper. Within this principle, the collaborative development and, in turn, the shared understanding of a company’s multinational revenue goals, customer demographics, and customer expectations are critical. Focusing on quality-driven processes that bridge “workgroup divides” and “technology silos” is equally so.

The good news is that a number of globalization challenges are universal, regardless of industry. Many organizations are sharing their stories within communities designed to help companies learn from each other. Gilbane Group provides free access at https://gilbane.com to compelling stories on tackling and overcoming the challenges of a truly global content lifecycle.

In addition, methodologies such as those from Six Sigma, Total Quality Management (TQM), LISA’s QA Model, the European Quality Standard for Translation Services, ISO, and ASTM are all of great value to all organizations. Industry-specific help is also available in areas such as automotive, medical device manufacturing, and construction. Finally, technology standards such as TMX, TBX, XLIFF, and DITA are facilitating success for a number of organizations. This whitepaper includes a Resources Appendix for more information on quality methodologies and technology standards.

The Quality Quotient in Practice

Readers of this paper live with the pressures of the Global Information Age, as discussed throughout:

- Information availability, by itself, is no longer sufficient. Information must also be accessible in the customer’s preferred language.

- Translation, by itself, is no longer sufficient. Translated content must also be of the highest quality in order to ensure customer satisfaction with information-centric products.

The risks of not implementing a quality strategy and ongoing improvement are onerous: lack of revenue growth, compromised success of global expansion plans, decrease in brand loyalty, increased translation costs as outmoded processes do not scale, and increased costs of customer support directly attributable to poorly translated business content.

How can a company begin implementing a quality enhancement program today? The quality quotient principles described in this paper can provide a useful starting point for shaping the enterprise dialog and, possibly, developing strategies and tactics for addressing content quality issues.

Unique Dimensions of Quality, Universal Application of Technology

We’ve defined the quality quotient as the level of satisfaction that a customer achieves when using information-centric products. Every company gauges customer satisfaction in a way unique to its business, and so has unique opportunities for improving quality. For some, quality might be measured as volumes of calls to a support desk; greater re-use of standard corporate terminology can result in lower call volume as content becomes less confusing. For others, quality might be measured as a rate of onboarding new customers in a region of global expansion; greater collaboration between content creators, translators, and project managers can result in better web forms that are consistently user-friendly.

While the dimensions of the quality quotient might be particular to an individual organization, the application of technology can deliver quality benefits to any enterprise. A good starting point, then, for an organized approach to improving quality is to focus initially on the impact of technologies that enable knowledge workers to produce more and better content that satisfies customers—while also introducing process efficiencies and reducing costs along the way. Those technologies include enriched authoring, terminology management, translation memory, contextual search and retrieval, and API-level integration, as discussed in this paper.

Creating a Blueprint for a Quality Strategy

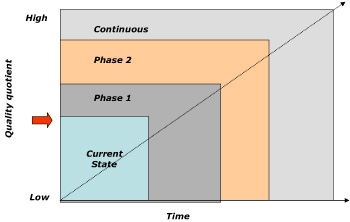

Successful business initiatives follow a similar arc. To grossly oversimplify, we set a goal, determine current state, identify and implement incremental changes and success criteria, measure success against metrics, reach the goal, and monitor and change for continuous improvement.

The figure below outlines a general model, with the quality quotient moving from low to high over the lifetime of a program of systematic enhancements.

There are as many approaches to phasing quality enhancements as there are enterprises implementing them. Any company’s quality strategy is influenced by its most pressing quality problem, its budget, and even constraints within the existing technology infrastructure. The following scenarios are offered only as examples of how organizations might think about applying technology in phases.

- Map the enhancements to phases in the global content lifecycle: create, manage, translate, and publish. The organization might introduce enriched authoring capabilities as part of the first phase, ensuring that knowledge workers who create content are connected with content sources that enable consistency and reuse. Access to a corporate guideline on approved product name conventions during content creation, for example, allows an author to improve the “translation readiness” of text. In phase two of the quality enhancement program, the enterprise might bring in technology that addresses the management phase of the global content lifecycle, such as integration with a content management system. Each new introduction of technology is designed to ratchet up the quality quotient and deliver a more satisfying global customer experience through better content.

- Map the enhancements to processes specific to improving, maintaining, and measuring the quality quotient. The organization might introduce technologies in phases that are based on the core concepts presented in this paper. First, connect people with content (improve the quality quotient). Second, connect people with people (maintain the quality quotient). Third, connect globalization strategies with customer satisfaction, as well as with other corporate business objectives (measure the quality quotient). Then, of course, optimize on an ongoing basis through good governance of the solution and the people, processes, and technologies comprising it. The specific technologies that can be implemented in each of the “Improving,” “Maintaining,” and “Measuring” phases are described in the corresponding sections of this white paper.

The development of high-level problem scenarios can often be used to help stakeholders identify and prioritize the problem areas that are ripe for fixing or optimizing. For example, a multi-national manufacturer of medical equipment experiences a greater-than-normal increase in the number of calls to customer service after extending distribution of a new product into a new territory. A common problem is confusion between instructions in the documentation shipped with the product, in the knowledge base that the field service technicians use, and on the company’s customer extranet. Which of the technologies discussed in this paper can help address the problem?

It is important to acknowledge that technology is not the only element that enables a company to increase its quality quotient, incrementally and over time. The other factors identified in this paper – people and process, best practices, and industry standards – can be dialed up and dialed down to create a quality quotient recipe that is right for each individual organization. And it is certainly the case that other factors outside the realm of the quality of product-centric content influence customer satisfaction, from the ability to execute product development plans to the logistics of getting the purchased product to the customer’s doorstep or warehouse.

At the end of the day, however, technology is universal, increasingly available to companies of any size and with any budget. As it enables effective multilingual business communication, there is no doubt that the right technology will be a key differentiating factor for successful global companies. Technology can empower knowledge workers with tools, connect processes with data sources that support task completion, eliminate redundancy and inefficiency, and provide scalability.

At the very least, the quality quotient concept can be used to bring stakeholders to the table and provide a common language for starting the conversation about enhancing quality and impacting customer satisfaction in positive ways.

Conclusions: The Gilbane Group Perspective

Organizations that define, measure, and strive for continuous improvement of information-centric products have a tangible base for a quality-driven global content lifecycle. A quality quotient based on customer satisfaction can enable:

- Reduced content creation and translation costs

- Increased process efficiency

- Decreased time to market

- Simultaneous product shipments

- Increased brand loyalty

- Decreased support calls and inquiries

Collaborative processes, built on knowledge workers who share an understanding of corporate objectives, are imperative. Connecting people with people is as important as connecting people with content, facilitative processes, and technology. And as the saying goes for information, the goal is to connect the right people at the right time for the right task.

One final recommendation: We cannot sufficiently stress the importance of integration as a requirement for effective management of the global content lifecycle. Establishing programmatic interfaces between source and translated content for automated content exchange, enabling flexible, user-defined workflows, and giving project managers visibility into the state of current processes are capabilities that integration of content management and translation process management can deliver to an enterprise. They will be fundamental to success in the Global Information Age.

Focus on Sajan

The Gilbane Group appreciates the contribution of content for this section from Sajan.

Achieving the optimum blend of cost control, time to market and translation quality requires a structured process and a robust technology platform. Virtual collaboration among all global content lifecycle participants is imperative. Quality is a process, not an event. The measure of a provider should not necessarily be a one-time translation. Rather, it should be a broader view of the process and the ability to achieve continuous improvement by way of a seamless and visible environment.

Data is at the very heart of this issue. It is the asset that can serve the authoring and translation content worker. The Language Service Provider of tomorrow extends well beyond a service-only model or use of traditional Translation Memory technology. The innovative providers of tomorrow will offer quality translation services in an easy-to-access, easy-to-manage technology environment that empowers the enterprise.

Sajan provides a complete Translation Management System (TMS) in an on-demand model to its global customers. The Global Communication Management System (GCMS) incorporates all the necessary functions of the Global Content Lifecycle and fully supports the concepts presented in the Quality Quotient. Business process automation is properly tied to an advanced multilingual data management technology which offers more than segment reuse at the document level. This technology-centric approach is challenging the traditional approaches and enabling a higher level of return in the form of both financial gain and quality and process improvement. Content authors, translators and consumers share a single on-demand environment.

Improvement cannot be achieved by using 25-year-old technology. Improvement requires a new approach and new tools. It requires a new vantage point, one which covers creation, translation and publication. Maintaining any solution will require a consistent method and toolset. When all process steps and participants are using such a broad-based technology, quality and process measurement becomes a simple task.

A key advantage of an on-demand technology strategy is that it makes getting started easy. Implementation time is measured in days, not weeks or months. Many companies are now enjoying the benefits of a completely seamless solution. The demand for language translation is growing. Its importance and value to the business are undisputed. The corporate enterprise can no longer afford to ignore the benefits and, with them, the complexities that come with producing and managing global content. A topic this serious requires a more advanced approach and one that is enterprise friendly.

For more information, please contact:

Vern Hanzlik, Chief Marketing Officer

625 Whitetail Blvd.

River Falls, WI 54022

877-426-9505

vhanzlik@sajan.com

http://www.sajan.com

——————————————

[1.] Darlington, Lloyd. 1998. Banking without Boundaries in Blueprint to the Digital Economy: Creating Wealth in the Era of E-Business, edited by Tapscott, D. McGraw-Hill Companies.

Resources Appendix

| Resource | Description |
|---|---|
| ASTM F2575-06 | The Standard Guide for Quality Assurance in Translation from ASTM International that identifies factors relevant to the quality of language translation services for each phase of a translation project. See http://www.astm.org/ |
| BS EN15038:2006 | The first European standard to set out the requirements for the provision of quality services by translation service providers (TSPs). It charts the best practice processes involved in providing a translation service through commissioning, translation, review, project management and quality control, to delivery. See http://www.bsi-global.com/en/Standards-and-Publications/Industry-Sectors/Services/Services-Products/BS-EN-150382006/ |
| DITA | An XML standard that supports single-sourcing across books, help files, training, and multimedia to enable modular, topic-based authoring through rich, semantic markup. DITA incorporates special features for localization, accessibility, and robust conditional processing. DITA version 1.1 is an OASIS Standard. See http://dita.xml.org/ and http://www.oasis-open.org/ |
| ISO 9000 | A series of eleven international standards on quality management systems, developed and maintained by ISO technical committee ISO/TC 176. Standards include ISO 9001:2000 (requirements for a quality management system), ISO 9000:2000 (fundamentals and vocabulary), ISO 9004:2000 (guidelines for performance improvements), and ISO 19011:2002 (guidelines for auditing quality and/or environmental management systems). See http://www.iso.org/ |
| LISA QA Model | A model to help manage the quality assurance process for all components in a localized product, including functionality, documentation and language issues. Developed through the Localization Industry Standards Association via the OSCAR (Open Standards for Container/content Allowing Reuse) subgroup. See |
| OASIS’ XLIFF | The purpose of the OASIS XLIFF TC is to define, through extensible XML vocabularies, and promote the adoption of, a specification for the interchange of localisable software and document based objects and related metadata. See http://www.oasis-open.org/committees/tc_home.php?wg_abbrev=xliff |
| Segmentation Rules eXchange (SRX) | An XML-based standard for description of the ways in which translation and other language-processing tools segment text for processing. It was created when it was realized that TMX leverage is lower than expected in certain instances due to differences in how tools segment text. SRX is intended to enhance the TMX standard so that translation memory (TM) data that is exchanged between applications can be used more effectively. Having the segmentation rules that were used when a TM was created will increase the leverage that can be achieved when deploying the TM data. See |
| Six Sigma | A disciplined methodology that uses data and statistical analysis to measure and improve a company’s operational performance by identifying and eliminating “defects” in manufacturing and service-related processes. See |
| TermBase eXchange (TBX) | An XML-based standard format for terminological data. This standard provides a number of benefits so long as TBX files can be imported into and exported from most software packages that include a terminological database. TBX was submitted to the International Organization for Standardization (ISO) on February 21, 2007, for official consideration and possible adoption as an ISO standard. See |
| Translation Memory eXchange (TMX) | An XML standard for the exchange of Translation Memory (TM) data created by Computer Aided Translation (CAT) and localization tools. The purpose of TMX is to allow easier exchange of translation memory data between tools and/or translation vendors with little or no loss of critical data during the process. See |
| W3C Internationalization (I18n) Activity | A working group within the W3C that liaises with other organizations to make it possible to use Web technologies with different languages, scripts, and cultures. It ensures that W3C’s formats and protocols are usable worldwide in all languages and in all writing systems. See http://www.w3.org/International/ |
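
To make the exchange formats listed above a little more concrete, the following is a minimal sketch of how a TMX file might be read with Python's standard library. It is an illustration only, not a description of any vendor's implementation. The sample content, the header attribute values, and the function name `read_translation_units` are hypothetical; the element names (`tmx`, `header`, `body`, `tu`, `tuv`, `seg`) follow the TMX 1.4 format.

```python
import xml.etree.ElementTree as ET

# ElementTree exposes the xml:lang attribute under the XML namespace URI.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

# Hypothetical sample invented for illustration: one translation unit (tu)
# holding an English and a French variant (tuv) of the same segment.
SAMPLE_TMX = """<tmx version="1.4">
  <header creationtool="example-tool" creationtoolversion="1.0"
          segtype="sentence" o-tmf="example" adminlang="en"
          srclang="en" datatype="plaintext"/>
  <body>
    <tu>
      <tuv xml:lang="en"><seg>Press the power button.</seg></tuv>
      <tuv xml:lang="fr"><seg>Appuyez sur le bouton d'alimentation.</seg></tuv>
    </tu>
  </body>
</tmx>"""


def read_translation_units(tmx_text):
    """Return one dict per translation unit, mapping language code to text."""
    root = ET.fromstring(tmx_text)
    units = []
    for tu in root.iter("tu"):
        segments = {}
        for tuv in tu.findall("tuv"):
            lang = tuv.get(XML_LANG)
            seg = tuv.find("seg")
            if lang is not None and seg is not None:
                # itertext() also collects text nested inside inline markup.
                segments[lang] = "".join(seg.itertext())
        units.append(segments)
    return units


if __name__ == "__main__":
    for unit in read_translation_units(SAMPLE_TMX):
        for lang, text in unit.items():
            print(f"{lang}: {text}")
```

Because each translation unit carries its language variants side by side, a tool that reads the file this way can look up an existing translation for a source segment without depending on the tool that created the memory, which is the interchange benefit the TMX entry describes.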